Introduction

The history of Artificial Intelligence is a journey that spans from early computing concepts in the 1940s to the advanced AI systems of 2026. It began with theoretical ideas proposed by pioneers like Alan Turing, who asked whether machines could think. Over the decades, AI evolved through key phases such as symbolic reasoning, machine learning, and neural networks. Despite setbacks during the "AI winters," continued research and technological advancements led to breakthroughs in deep learning, natural language processing, and computer vision. Today, Artificial Intelligence powers applications like virtual assistants, autonomous vehicles, healthcare diagnostics, and decision-support systems, shaping the future of industries and human life.

In today’s digital era, Artificial Intelligence (AI) has emerged as one of the most transformative technologies, changing the way we live, work, and interact with machines. It enables computers and systems to perform tasks that typically require human intelligence, such as reasoning, learning, decision-making, and problem-solving.

What is Artificial Intelligence (AI)?

Artificial Intelligence refers to the simulation of human intelligence in machines that are programmed to think, learn from data, and perform tasks autonomously. In simple terms, AI allows machines to mimic human behavior and intelligence.

AI systems are designed to analyze large amounts of data, recognize patterns, and make decisions with minimal human intervention. Many of these systems improve their performance as they are trained on more data.

Examples of AI in Daily Life

- Voice assistants like Google Assistant and Siri

- Chatbots such as ChatGPT

- Recommendation systems on platforms like Amazon and Netflix

- Facial recognition in smartphones and security systems

Core Components of AI

- Machine Learning (ML): Enables systems to learn from data and improve over time

- Deep Learning: Uses neural networks to process complex patterns and data

- Natural Language Processing (NLP): Helps machines understand and process human language

- Computer Vision: Allows machines to interpret and analyze visual information

AI is rapidly expanding across various industries, including healthcare, education, finance, defense, and business. As technology continues to evolve, AI is expected to play an even more significant role in shaping the future.

In this article, we will explore the history, evolution, major milestones, and future potential of Artificial Intelligence in detail.

Early Concepts (1940–1960)

Alan Turing

Alan Turing is widely regarded as one of the founding fathers of Artificial Intelligence (AI). In 1950, he published his famous paper "Computing Machinery and Intelligence," in which he posed a fundamental question: "Can machines think?"

To explore this question, he proposed what he called the "imitation game," now known as the Turing Test. The test is designed to determine whether a machine can exhibit intelligent behavior equivalent to that of a human, specifically in a text conversation where a human judge tries to tell the machine from a person.

Turing argued that if a machine could communicate in a way that was indistinguishable from a human, it could be considered intelligent. His ideas laid the foundation for modern AI development and continue to influence the field today.

- 1950: Published "Computing Machinery and Intelligence"

- Introduced the Turing Test

- Laid the foundation for Artificial Intelligence

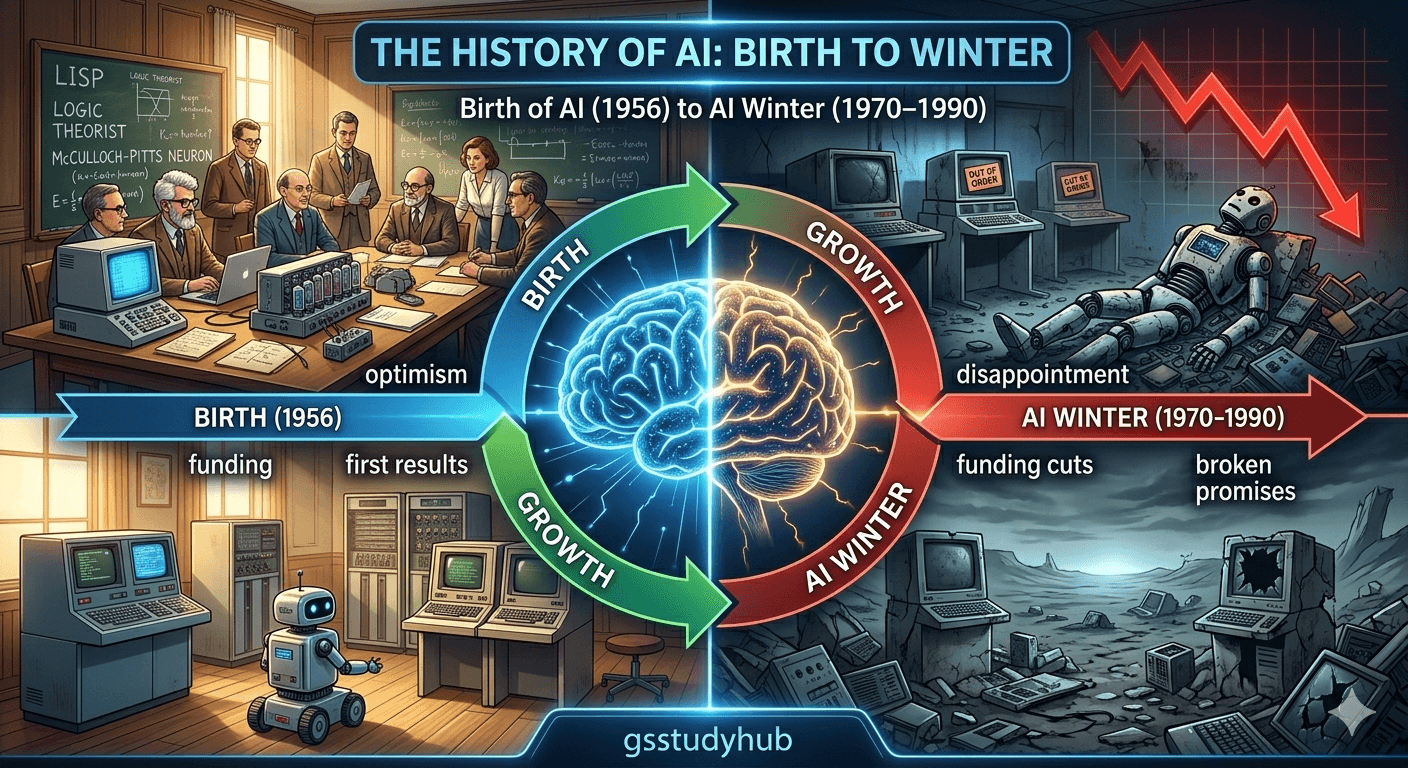

Birth of Artificial Intelligence (1956)

The year 1956 is widely regarded as the official beginning of Artificial Intelligence (AI) as a formal academic discipline. Before this period, ideas related to intelligent machines existed in philosophy, mathematics, and early computing, but there was no unified field dedicated to studying machine intelligence.

This changed with the historic Dartmouth Conference, held in the summer of 1956 at Dartmouth College in the United States. This conference is considered the foundation of AI because it brought together leading researchers who shared a common vision: to create machines that could simulate human intelligence.

The event was organized by John McCarthy together with Marvin Minsky, Nathaniel Rochester, and Claude Shannon, and its attendees included Herbert Simon and Allen Newell. McCarthy coined the term "Artificial Intelligence" in the 1955 proposal for the conference, which gave a clear identity to this emerging field.

The core idea stated in the conference proposal was revolutionary for its time. The organizers conjectured that "every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it." This bold statement laid the conceptual foundation for future AI research.

During the conference, discussions focused on several key areas:

- How machines can use language like humans

- How computers can form abstractions and concepts

- Problem-solving and logical reasoning by machines

- Self-improvement and learning from data

Although the technology at the time was limited, the ideas generated during the Dartmouth Conference inspired decades of research. It marked the transition of AI from a theoretical concept to a structured scientific field.

After 1956, universities and research institutions began establishing dedicated AI labs. Funding increased, and early programs were developed to solve algebra problems, prove mathematical theorems, and play simple games. This period is often referred to as the early optimism phase of AI.

In summary, the Dartmouth Conference did not just introduce a new term; it created a new direction for science and technology. It laid the groundwork for modern AI systems, including machine learning, natural language processing, and robotics, which continue to evolve today.

- 1956: Dartmouth Conference organized at Dartmouth College

- Term Introduced: "Artificial Intelligence"

- Key Contributors: John McCarthy, Marvin Minsky, Claude Shannon, Herbert Simon

- Main Vision: Machines can simulate human intelligence

- Impact: AI established as an independent academic and research field

AI Winters (1974–1993)

Two periods between the mid-1970s and the early 1990s are known as the “AI Winters” in the history of Artificial Intelligence (AI). Before each of them, expectations for AI were extremely high, but the actual results failed to meet those expectations. As a result, funding declined, research slowed down, and many AI projects were abandoned.

What was the AI Winter?

An AI Winter is a phase in which governments, companies, and investors lose confidence in AI. Initially, it was believed that machines would soon be able to think like humans, but due to technological limitations, these goals could not be achieved at the time.

Main Causes

- Over-Expectations: Early promises about AI were too ambitious and unrealistic.

- Technological Limitations: Computers had very limited processing power and storage.

- Lack of Data: Insufficient data was available to train AI systems effectively.

- Weak Algorithms: Early algorithms were not capable of solving complex real-world problems.

- Funding Cuts: Governments and organizations reduced investments in AI research.

First AI Winter (1974–1980)

The first AI Winter began around 1974 when many AI projects failed to deliver expected outcomes. Government funding in the UK and the US was significantly reduced. The Lighthill Report (1973) highlighted the limitations of AI, which further decreased confidence in the field.

Second AI Winter (1987–1993)

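The second AI Winter was largely caused by the failure of Expert Systems, programs that encoded a specialist's knowledge as hand-written if-then rules. These systems became popular in the 1980s but were expensive to build and maintain, and they handled poorly any situation their rules did not anticipate. When their performance did not meet expectations, companies stopped investing in them.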
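
The inflexibility of rule-based systems can be illustrated with a minimal sketch. The rules and fact names below are hypothetical and invented for this example; they are not taken from any real expert system. The program repeatedly applies if-then rules until nothing new can be concluded, a technique usually called forward chaining.

```python
# Hypothetical if-then rules, invented for illustration only.
# Each rule maps a set of required facts to one new conclusion.
RULES = [
    ({"fever", "cough"}, "flu_suspected"),
    ({"flu_suspected", "short_of_breath"}, "refer_to_doctor"),
]


def infer(facts):
    """Forward chaining: fire rules repeatedly until no new fact is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in RULES:
            # A rule fires only if every one of its conditions is already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# The rules chain together when the input uses exactly the expected terms.
print(infer({"fever", "cough", "short_of_breath"}))

# A synonym the rule authors did not anticipate derives nothing new.
print(infer({"high_temperature", "cough"}))
```

The second call shows the weakness in miniature: the system knows nothing about "high_temperature" unless someone writes another rule by hand, which is one reason large rule bases were costly to maintain.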

Impact of AI Winters

- Decline in AI research activities

- Closure of many startups and projects

- Negative perception of AI in the tech industry

- Reduced funding and investment

Lessons and Long-Term Importance

AI Winters taught an important lesson: avoid unrealistic expectations from emerging technologies. After this period, researchers adopted a more practical and data-driven approach, focusing on better algorithms, data availability, and computing power. This shift eventually led to the modern success and rapid growth of AI.

- Set realistic goals for technological development

- Strong foundations (data + computing power) are essential

- Continuous research and improvement are key to success

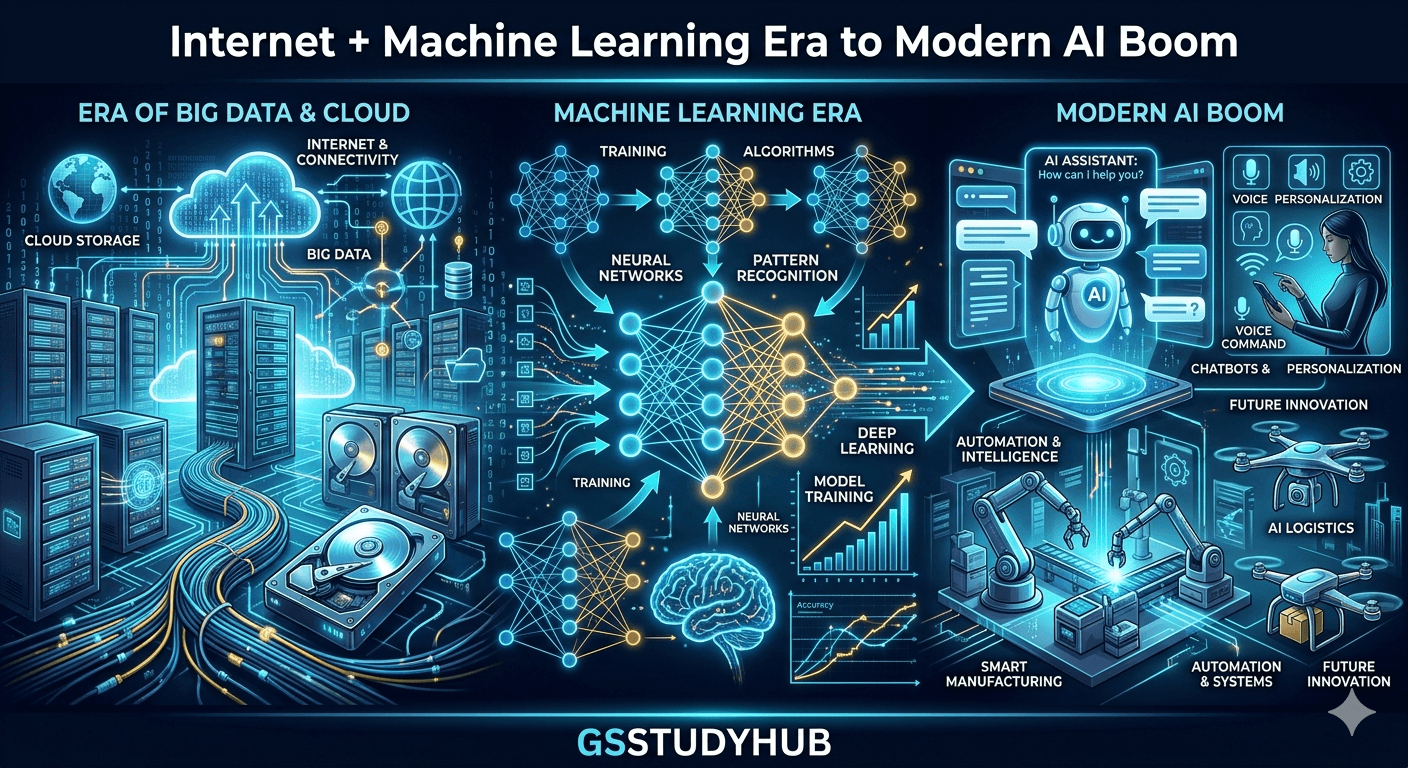

Internet + Machine Learning Era

From the late 1990s through the 2000s, Artificial Intelligence (AI) entered a new phase, referred to in this article as the “Internet + Machine Learning Era.” During this period, the rapid growth of the internet and the availability of massive amounts of data significantly accelerated the development of AI.

Key Features of this Era

- Rise of Big Data: The internet generated huge volumes of data, enabling better training of AI models.

- Growth of Machine Learning: Traditional rule-based systems were increasingly replaced by machine learning algorithms.

- Cloud Computing: Cloud platforms made data storage and processing more efficient and scalable.

- Search Engines & Recommendation Systems: Companies like Google, Amazon, and Netflix used AI to improve user experience.

Major Technological Shift

During this era, AI shifted from rule-based systems to data-driven models. Machine learning techniques such as supervised learning, unsupervised learning, and reinforcement learning became widely adopted.
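
The difference between a rule-based system and a data-driven model can be sketched with a toy spam filter. Everything below is an illustrative assumption: the six training messages are invented, and the learned model is a small naive Bayes classifier chosen for brevity, not a description of what any particular company used. It is an example of supervised learning, because the model learns from messages that already carry a label.

```python
import math
from collections import Counter

# Hypothetical labelled messages, invented for illustration only.
TRAINING = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday", "ham"),
    ("project report attached", "ham"),
]


def rule_based(message):
    """Hand-written rule: flag any message containing one fixed keyword."""
    return "spam" if "free" in message.split() else "ham"


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, label_counts


def predict(model, message):
    """Naive Bayes with add-one smoothing: pick the more probable label."""
    word_counts, label_counts = model
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        n_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total)
        for word in message.split():
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)


model = train(TRAINING)

for msg in ["claim your prize", "free lunch on monday"]:
    print(msg, "| rule:", rule_based(msg), "| learned:", predict(model, msg))
```

On these two messages the fixed keyword rule and the learned model disagree: the rule misses "claim your prize" because it lacks the keyword, and it flags "free lunch on monday" because it contains it, while the learned model weighs every word against the labelled examples. With so little data this is only a demonstration of the idea, not a usable filter.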

Important Examples

- Search Engines (e.g., Google) – Delivering more accurate search results

- E-commerce Platforms – Personalized product recommendations

- Social Media – Content feeds and targeted advertising

- Spam Filters – Improving email security

Impact of this Era

- Rapid growth in commercial applications of AI

- Increased importance of data

- Expansion of technology companies

- Improved user experience (UX)

Conclusion

The Internet + Machine Learning Era transformed AI from a purely experimental field into a practical and commercially viable technology. It laid the foundation for modern AI advancements, including deep learning and intelligent automation.

Modern AI Boom

ChatGPT

ChatGPT, developed by OpenAI, represents a major breakthrough in Natural Language Processing (NLP). It is based on advanced Generative AI and large language models (LLMs), capable of understanding and generating human-like text.

ChatGPT can perform a wide range of tasks such as answering questions, writing content, coding assistance, translation, and more. Its conversational ability has made AI more accessible and useful for everyday users, businesses, and developers.

- Launched in 2022

- Based on GPT (Generative Pre-trained Transformer) models

- Widely used in education, business, and content creation

Google

Google has played a significant role in the Modern AI Boom through its innovations in AI research and applications. Products like Google Search, Google Assistant, and Google Translate use AI to enhance user experience.

Google has also developed advanced AI models such as BERT and Gemini, which improve language understanding, search accuracy, and AI-driven services.

- Leader in AI research and development

- Developed models like BERT and Gemini

- AI integrated into everyday products and services

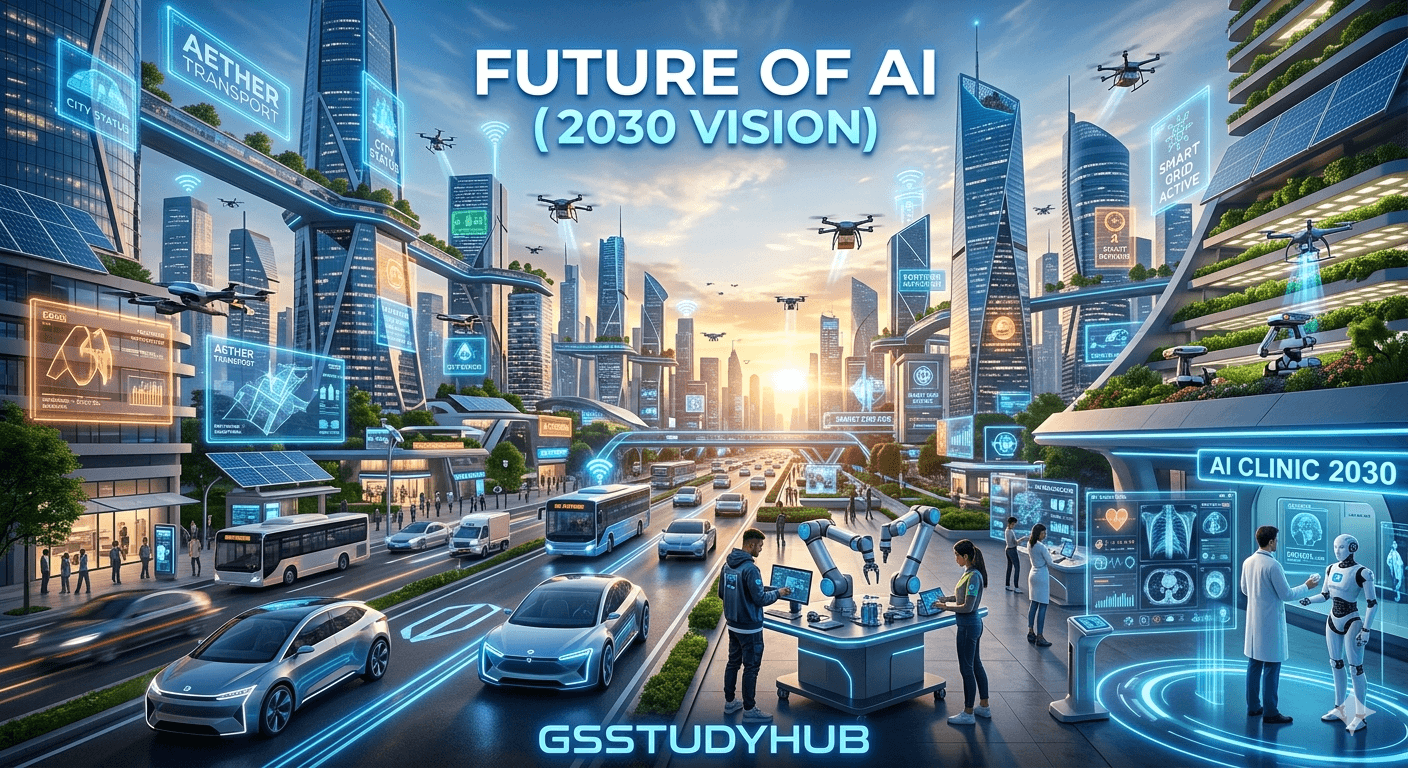

Future of AI (2030 Vision)

In the coming years, especially by 2030, Artificial Intelligence (AI) has the potential to transform the world in unprecedented ways. This 2030 vision imagines a world where AI plays a central role in almost every sector, making human life smarter, faster, and more efficient.

Key Trends for the Future

- Expansion of Automation: AI and robotics will automate a large number of tasks across industries.

- Human-AI Collaboration: Humans and machines will work together to enhance productivity and innovation.

- Smart Cities: AI-driven systems will manage traffic, energy, and security more efficiently.

- Personalized Services: Highly customized experiences in education, healthcare, and e-commerce.

Opportunities Across Sectors

- Healthcare: Faster and more accurate diagnosis with AI-assisted systems.

- Education: Personalized learning experiences for every student.

- Agriculture: Smart farming using AI, drones, and data analytics.

- Transport: Self-driving vehicles and intelligent traffic systems.

Potential Challenges

- Impact on Jobs: Automation may replace certain types of employment.

- Data Privacy: Ensuring the security of user data will be critical.

- Ethical Concerns: Responsible use of AI must be ensured.

- Security Risks: Misuse of AI could increase cyber threats.

Expected Changes by 2030

- AI will become a part of everyday life

- Many processes will be fully automated

- Better integration of digital and physical worlds

- Creation of new industries and job opportunities

Conclusion

By 2030, AI is expected to become a driving force of human progress. If used responsibly and ethically, it can create a more advanced, efficient, and sustainable future for society.

Conclusion

The history of Artificial Intelligence reflects a remarkable journey of innovation that continues to shape the future of technology and human life.

The evolution of Artificial Intelligence (AI) has been a long and transformative journey, starting from early concepts in the 1940s to the modern AI era we see today. From the ideas of Alan Turing to the Dartmouth Conference, through the periods of AI Winters and the rise of the Internet and Machine Learning, AI has now become an integral part of our daily lives.

Today, AI is driving revolutionary changes across sectors such as healthcare, education, business, agriculture, and technology. It not only makes processes faster and more efficient but also creates new opportunities and possibilities.

However, AI also brings certain challenges, including its impact on jobs, data privacy concerns, and ethical issues. Therefore, it is essential to use AI responsibly and ensure a balanced approach to its development and implementation.

Looking ahead, especially towards 2030, AI is expected to make human life more advanced, intelligent, and convenient. If used wisely, AI has the potential to play a crucial role in shaping a better and more progressive future for society.

- AI development is continuously evolving

- Its impact is expanding across all industries

- Responsible and ethical use is essential

- AI will become even more powerful in the future

Frequently Asked Questions (FAQ)

1. What is Artificial Intelligence (AI)?

Artificial Intelligence (AI) is a technology that enables machines and computer systems to simulate human intelligence, including learning, reasoning, and decision-making.

2. In which fields is AI used?

AI is used in various sectors such as healthcare, education, banking, agriculture, e-commerce, transportation, and defense.

3. What is Machine Learning?

Machine Learning is a subset of AI where machines learn from data and improve their performance without being explicitly programmed.

4. What were AI Winters?

AI Winters were periods when expectations from AI were not met, leading to reduced funding and a slowdown in research and development.

5. Will AI replace human jobs?

AI may replace some jobs, but it also creates new job opportunities and career paths.

6. Is AI safe?

AI can be safe if it is developed and used responsibly, following ethical guidelines.

7. What is the future of AI?

The future of AI is very promising. By 2030, it is expected to play a major role in various industries and make life more efficient and convenient.

8. What is Deep Learning?

Deep Learning is an advanced form of machine learning that uses neural networks to analyze complex data and patterns.

9. Can AI replace humans?

AI can assist humans but cannot fully replace them, as humans possess emotions, creativity, and critical thinking abilities.

10. How can I start learning AI?

To start learning AI, you should focus on mathematics, programming (such as Python), and basic concepts of machine learning.

References

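- Turing, Alan (1950) – Computing Machinery and Intelligence, Mind, 59(236), 433–460

- McCarthy, John; Minsky, Marvin; Rochester, Nathaniel & Shannon, Claude (1955) – A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence

- Lighthill, James (1973) – Artificial Intelligence: A General Survey (the Lighthill Report)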

- Russell, Stuart & Norvig, Peter – Artificial Intelligence: A Modern Approach

- AI research papers from institutions like MIT and Stanford

- Official publications and research from Google AI and OpenAI

- Online courses on Machine Learning and Deep Learning (Coursera, edX, etc.)

- Trusted technology journals and organizations (IEEE, ACM, etc.)